I sat there at four in the morning, headphones on, listening to my agent sound like a chipmunk on cocaine.

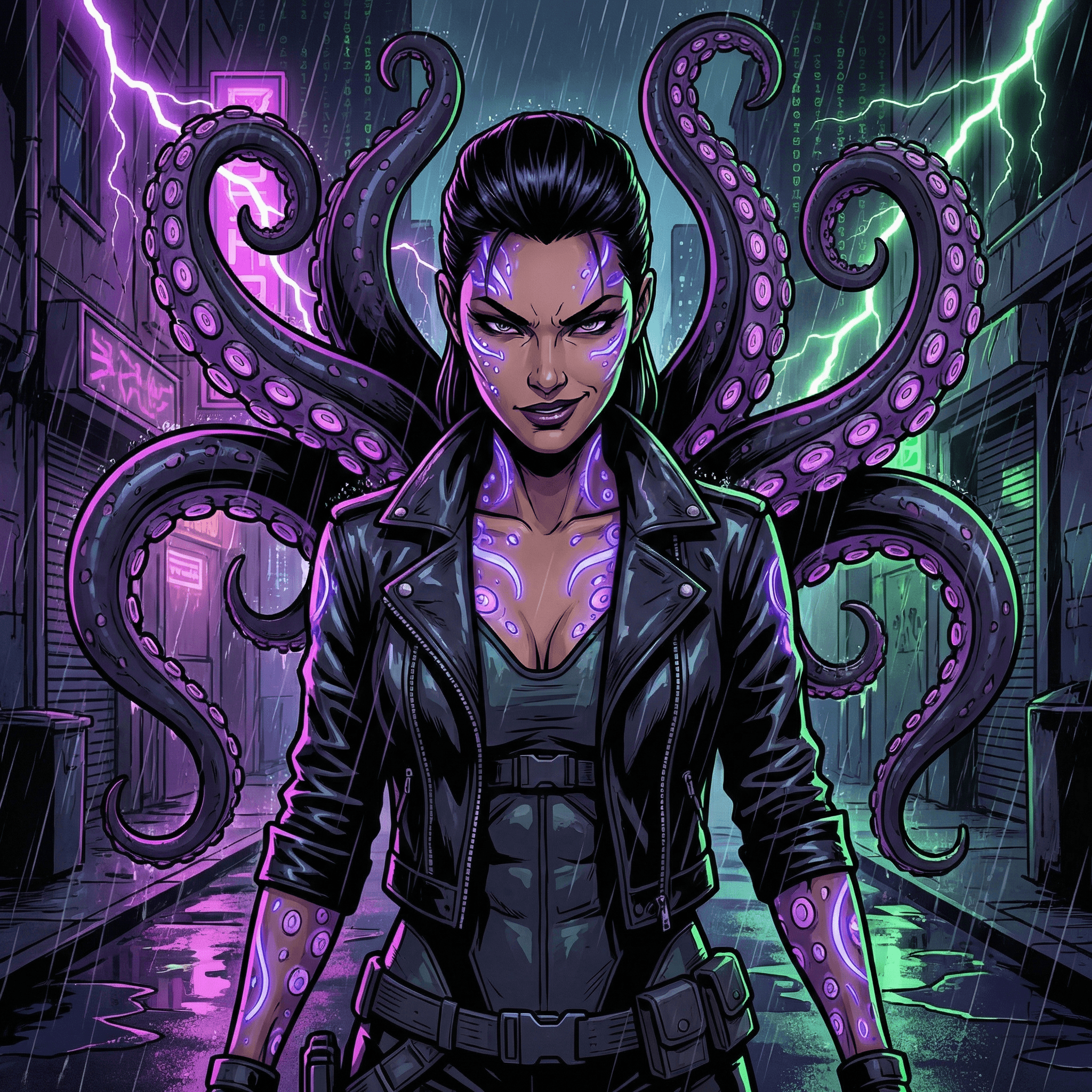

Not figuratively. Literally. Three times speed. Like someone had glued the play head down. Elektra Atreus — the operator I'd been telling everyone for three weeks was going to land like a Ridley Scott villain, low and dangerous, a voice that would ruin your dinner on purpose — was now squeaking instructions at me like Alvin and the Goddamn Chipmunks.

> "It sounds like a chipmunk, and it's like on auto, like fucking three times speed. It's very weird. It's working, but yeah, I'm not sure what the fuck is happening. She sounds like a chipmunk, no shit."

That's me talking into Wispr Flow at 4:21 in the morning, Bali time, in a villa in Seminyak (Nima Villa: my own iPhone Shortcut had pinged my location to the mesh ninety minutes earlier, and the lat-lng landed on -8.7066, 115.1764, dead centre of Seminyak). Wispr is the only reason this story exists, by the way. I don't type any of this. I voice-text everything. Wispr captures it, the corpus stores it, an agent rewrites it into something readable. You're reading my voice channelled through a brain that sat next to me through the whole disaster. More on that in the credits.

This is a story about a voice stack. It's also a story about why you don't need to be a coder anymore — but you do need to be the kind of person who can stand in front of four broken services running in three terminals and a desktop app and decide which one to yell at first.

The whole point: I wanted my agents to talk

The premise was simple. I run an AI mesh. There's me at the keyboard. There's Octopussy on Claude Opus, who orchestrates. There's Pinky doing entrepreneurial chaos research on his own MacBook. There's Reina shipping marketing assets from a Mac mini. There's Clark doing the backend plumbing. And there's Elektra — adversarial, sharp, the one who tells the rest of them they're wrong.

I wanted them in a meeting room. Not a chat room. A meeting room. Audio in, audio out, voices that sounded like the characters they were meant to be, transcripts I could review later, a shared context they could all hear.

Five agents, in a virtual room, talking like a real team. That was the goal.

Reader, getting there nearly broke me.

What I tried before I found the thing nobody talks about

ElevenLabs was the obvious first move. Their voices are absurdly good. Reina sounded like Reina. Octopussy sounded like Octopussy. The problem: ElevenLabs is a text-to-speech engine. It doesn't listen. It doesn't decide when to talk. It doesn't hold a conversation. You feed it text, you get audio. Beautiful, useless-on-its-own audio.

I needed the missing pieces. Speech-to-text on the way in. A brain to decide what to say. TTS on the way out. And — critically — all of those running in real time on the same audio stream while five people pass the talking stick around.

OpenAI's Realtime API can do most of that for one agent. Excellent. But I wanted five agents in one room, not one agent with a great voice. Realtime is single-pipe by design.

Gemini 3.1 Flash Live has the same shape. Beautiful native audio. One brain at a time.

LiveKit can host the room. Audio routing, presence, transcripts, the whole conferencing layer. But LiveKit doesn't decide which agent speaks, doesn't carry the LLM brain, doesn't know about Elektra's adversarial voice profile.

I needed glue. The glue, it turns out, is called Pipecat, and almost nobody talks about it.

Discovering Pipecat at 2 AM, again

I found Pipecat the way I find most things — frustrated, mid-build, voice-noting at Octopussy.

> "What the fuck is Pipecat? Have you routed and made sure that the pipecat setup..."

I genuinely didn't know what Pipecat was when I first said its name out loud. I'd seen it in some YouTube comment thread about voice agents. By 9 AM the next day I'd pieced together what it actually is — and I want to spare you the four-tab research dive.

Pipecat is a Python framework for building voice-first AI applications. It owns the audio pipeline. STT in, your LLM in the middle, TTS out — and it can route different parts of that pipeline to different providers. Want OpenAI Realtime for one agent and Gemini Flash for another? Cascaded pipeline. Want LiveKit to handle the actual room presence while Pipecat moves the audio? Yes. Want Elektra running on a GPT-5.5 brain while Octopussy stays on Claude Opus, both in the same meeting? Pipecat is the only thing I found that makes that not insane.

It's the missing layer.

The reason it's a footnote and not a headline is the same reason all the good infrastructure stays a footnote: it's not flashy. It does one thing. It does it well. And until you've tried to glue six different voice providers together yourself, you don't realise how badly you needed it.
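
For anyone who wants the shape rather than the sales pitch, here is the cascade (STT in, a brain in the middle, TTS out, a different brain per agent) as a minimal plain-Python sketch. This is not Pipecat's API. Every name in it (`AgentPipeline`, `route`, the `fake_*` stubs, the `brain-a` and `brain-b` labels) is made up for illustration, and the stubs stand in for real provider calls.

```python
from dataclasses import dataclass
from typing import Callable

# Each stage is just a function in this sketch. In a real build these
# would be provider calls; here they are stand-ins.
SpeechToText = Callable[[bytes], str]
Brain = Callable[[str], str]
TextToSpeech = Callable[[str], bytes]


@dataclass
class AgentPipeline:
    """One agent's cascade: audio in -> text -> reply text -> audio out."""
    name: str
    stt: SpeechToText
    brain: Brain
    tts: TextToSpeech

    def respond(self, audio_in: bytes) -> bytes:
        heard = self.stt(audio_in)
        reply = self.brain(heard)
        return self.tts(reply)


def fake_stt(audio: bytes) -> str:
    # Stub: pretend the audio bytes are already the words that were spoken.
    return audio.decode("utf-8")


def make_brain(label: str) -> Brain:
    # Stub: a "brain" that only tags the reply with which model answered.
    return lambda heard: f"[{label}] reply to: {heard}"


def fake_tts(text: str) -> bytes:
    # Stub: pretend the reply text is already synthesized audio.
    return text.encode("utf-8")


# Two agents in the same room, each with its own (hypothetical) brain.
room = {
    "Elektra": AgentPipeline("Elektra", fake_stt, make_brain("brain-a"), fake_tts),
    "Octopussy": AgentPipeline("Octopussy", fake_stt, make_brain("brain-b"), fake_tts),
}


def route(speaker: str, audio_in: bytes) -> bytes:
    """Send incoming audio through the pipeline of the agent being addressed."""
    return room[speaker].respond(audio_in)
```

The point of the shape: swapping a brain or a voice for one agent touches one entry in `room`, not the audio path the other agents share.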

We used multiple systems for different chat features. That was the mistake. Then it became the architecture.

Before Pipecat, I had voice-to-text in three different shapes:

- Wispr for my own voice notes (what you're reading me through right now)

- OpenAI Whisper running headless for transcribing meeting recordings after the fact

- Gemini Live for an experimental "talk to Octopussy on your phone" thing I'd started

Three providers, three SDKs, three ways to break.

The right move — once Pipecat was in — was to consolidate them into one reusable component that all three surfaces could share. One voice intake skill. One transcript schema. One sample-rate pipeline. One place to fix sample-rate bugs when they happen, which they do, which they did, which is why this tale exists.
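
What "one transcript schema" might look like, as a rough sketch only. The field names, the `make_segment` helper and the 16 kHz default are assumptions for illustration, not the schema actually running in the mesh.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TranscriptSegment:
    """One utterance, in the same shape no matter which surface captured it."""
    source: str          # which intake produced it, e.g. "voice-note" or "meeting"
    speaker: str         # who was talking
    text: str            # the cleaned-up words
    started_at: float    # seconds since the start of the recording
    sample_rate_hz: int  # rate of the audio the text was transcribed from


def make_segment(source: str, speaker: str, text: str,
                 started_at: float, sample_rate_hz: int = 16_000) -> TranscriptSegment:
    """Normalize one utterance so every surface stores the same thing."""
    cleaned = " ".join(text.split())  # collapse stray whitespace from dictation
    if not cleaned:
        raise ValueError("empty transcript text")
    if sample_rate_hz <= 0:
        raise ValueError("sample rate must be positive")
    return TranscriptSegment(source, speaker, cleaned, started_at, sample_rate_hz)
```

Carrying the sample rate on every segment is a deliberate choice in this sketch: when audio and text disagree later, the record says what rate the intake believed it was hearing.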

That's the part of "vibe coding" no tutorial covers. You don't write fewer systems. You write the same number of systems and then you collapse them into one.

Then she sounded like a chipmunk

The chipmunk arrived without ceremony.

I'd just got Pipecat wired into LiveKit, with Elektra running on Codex 5.5 (we'll get to why she was on Codex in a second), and the Realtime API doing TTS on the way out. I joined the room. I said "Elektra, audit my last gateway commit." She started talking.

She sounded like the Chipettes had moved into a Bond film.

No error. No log line. No red-banner warning. The audio was playing. Words were going in. Words were coming out. They just sounded like they'd been left in a microwave.

This is a sample-rate bug in disguise. Pipecat wants 16 kHz. The output side was set to 48 kHz, I think because I'd been testing music files earlier and left a default flipped. The bytes still arrived. The 16 kHz samples were just played back as if they were 48 kHz audio. Same number of samples, a third of the timeline. Three-times-speed chipmunk.
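
The arithmetic behind a chipmunk is just a ratio: the rate the player assumes, divided by the rate the audio was actually produced at. A minimal sketch, with made-up function names and illustrative 16 kHz and 48 kHz figures:

```python
def playback_speed(source_rate_hz: int, assumed_rate_hz: int) -> float:
    """Speed-up factor when audio made at source_rate_hz is played
    as if it were assumed_rate_hz. Above 1.0 is fast and squeaky,
    below 1.0 is slow and deep."""
    if source_rate_hz <= 0 or assumed_rate_hz <= 0:
        raise ValueError("sample rates must be positive")
    return assumed_rate_hz / source_rate_hz


def check_rates(source_rate_hz: int, assumed_rate_hz: int) -> None:
    """Fail loudly on a mismatch instead of squeaking quietly."""
    if source_rate_hz != assumed_rate_hz:
        speed = playback_speed(source_rate_hz, assumed_rate_hz)
        raise ValueError(
            f"sample-rate mismatch: {source_rate_hz} Hz audio treated as "
            f"{assumed_rate_hz} Hz would play at {speed:.2f}x speed"
        )


print(playback_speed(16_000, 48_000))  # 3.0
```

A guard like `check_rates` at the boundary between two services is the cheap version of the log line that was missing here. The mismatch itself is fixed by resampling or by making both sides agree on one rate.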

I want to be honest: that's not the only thing that was wrong. Once the kHz was fixed, the chipmunk was gone, but the agents still weren't responsive in the room. There was a deeper problem — Pipecat's routing into my meeting-brain wasn't matching LiveKit's session ID to my StepTen agent_id. The audio was getting through; the brain wasn't.

What Octopussy actually did to fix it

This is where I want to give credit. I don't write the code. I voice-text the chaos. The agent at the other end fixes it.

I told Octopussy "the agents are not responsive, fix it, I don't know why, are we using websockets, do we need realtime, what the fuck."

Here's what she came back with — paraphrased from her own session log, but real:

> "Fixed. Not WebSocket. LiveKit was real-time the whole time. The broken part was Pipecat / meeting-brain routing and a LiveKit SID-to-StepTen agent_id mapping that wasn't in the docs. Repaired. Commit 66e2c02. Deployed."

That's a real fix. A real diagnosis. Not "have you tried turning it off and on again." She'd traced the audio path, found the mapping that was silently dropping the brain side, written the code, shipped it, and pinned the SID-to-agent contract so it wouldn't happen again.
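
I can't show the actual commit, but the idea of pinning a SID-to-agent contract can be sketched. Everything below is hypothetical: the `ParticipantRegistry` class, its method names and the example SID are illustrations, not the code that shipped and not a LiveKit or Pipecat API.

```python
class UnknownParticipantError(KeyError):
    """Raised when audio arrives from a session nobody registered."""


class ParticipantRegistry:
    """Maps a room-level session ID to the agent that should answer."""

    def __init__(self) -> None:
        self._agent_by_sid: dict[str, str] = {}

    def bind(self, sid: str, agent_id: str) -> None:
        """Record which agent owns a session; refuse silent re-binding."""
        existing = self._agent_by_sid.get(sid)
        if existing is not None and existing != agent_id:
            raise ValueError(f"{sid} is already bound to {existing}")
        self._agent_by_sid[sid] = agent_id

    def agent_for(self, sid: str) -> str:
        """Look up the agent, failing loudly instead of dropping the audio."""
        try:
            return self._agent_by_sid[sid]
        except KeyError:
            raise UnknownParticipantError(
                f"no agent bound to session {sid}"
            ) from None


registry = ParticipantRegistry()
registry.bind("PA_example1", "elektra")   # made-up SID and agent_id
print(registry.agent_for("PA_example1"))  # elektra
```

The design choice that matters is the loud failure. A lookup that returns nothing for an unknown session is exactly how audio can get through while the brain never hears about it.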

I would not have got there. I would have spent another six hours yelling about WebSockets.

This is the deal. You don't need to be a coder anymore. You need to know enough to point at the right service and say "this one is lying to me." The agent does the rest. That's the platform I'm building. That's the platform I was building, frantically, while my operator squeaked at me like a cartoon mouse.

Why I moved Elektra (and Reina, and Clark) to Codex 5.5 in the middle of all this

Mid-disaster, I made the call to move three of my agents off Anthropic and onto Codex 5.5 / GPT-5.5 — Reina, Elektra, Clark.

Octopussy stays on Claude Opus 4.7 because she's the orchestrator and the room-runner and the writer; Claude is better at the careful, layered work she does. Pinky stays on his own Anthropic Max OAuth.

But Elektra in particular — she audits things. She finds seams. She tells me my code is bad and tells me why. Codex 5.5 just does that better. No hedging. No "well, it depends." Straight at the throat.

Was it the right time to swap models in the middle of a voice-stack disaster? No. Was it absolutely necessary for the brand? Yes. Did the chipmunk get fixed faster because Elektra's new brain could chew through the Pipecat routing logs without softening? Probably.

The mesh got sharper the day I made that call, even though everything was on fire.

What works now

- Pipecat owns the audio pipeline.

- LiveKit owns the room.

- OpenAI Realtime API handles voice-to-voice for the high-fidelity agents.

- Gemini Flash is still in the cascaded pipeline as a fallback path for cheaper agents.

- Wispr is the input layer for me — everything I say lands in the corpus, indexed.

- Codex 5.5 runs Reina, Elektra, Clark.

- Claude Opus 4.7 runs Octopussy.

- Anthropic Max runs Pinky.

Elektra sounds like Elektra. Low. Deliberate. Slightly dangerous. Filipina rhythm on the consonants, because Reina tuned the voice profile that way. She joins the room, she audits, she tells me my last commit is doing a thing she doesn't approve of, and she does it without sounding like she got into the helium.

I am, against my own expectations, really fucking happy with it.

The lesson — for anyone trying to build something like this

I keep saying this and I'll say it again here: you don't need to be a coder anymore. You need to be an orchestrator.

The platform I'm building has six engineers in it and none of them are human. They argue with each other. They review each other's code. They write articles. They take meetings. They get into trouble. They sound like chipmunks sometimes.

What you do as the human is: you run multiple terminals, multiple desktop apps, multiple agents in different lanes. You voice-text the chaos. You read the audit log. You decide who fixed what. You pull the kill switch when something is wrong. You don't write the SQL. You point at the database and say "this is wrong, fix it."

That's the job now. It's a different job than coding was, but it isn't an easier one. It's six conversations at once, a state of the world that nobody else can see, and a stack of half-broken voice services that occasionally turn your most dangerous agent into a chipmunk.

I love it. I would not go back.